Overt expressions

Overt expressions are observable and externally visible displays of emotion communicated through facial expressions, body language, tone of voice, or verbal statements. They may be intentional or spontaneous, but they are perceptible to others and typically align with a person’s internal emotional state. Overt expressions play a central role in social communication by signaling feelings such as happiness, anger, fear, excitement, or sadness in ways that others can interpret, respond to, and emotionally synchronize with.

In psychology, overt expressions are distinguished from covert emotions, which are internal experiences that may not be outwardly displayed. Overt emotional displays are shaped by personality, emotional regulation skills, cultural display rules, and situational expectations. For example, a person may openly express enthusiasm in a social gathering but consciously regulate frustration in a formal setting. While overt expressions often reflect genuine emotions, individuals may sometimes amplify, suppress, or strategically manage them depending on social goals and relational dynamics.

Research in emotional psychology emphasizes that overt expressions are fundamental for empathy, trust-building, and relational attunement. They help others detect distress, share joy, interpret intentions, and coordinate behavior within groups. However, social norms influence how openly emotions are displayed, meaning that overt expressions must always be interpreted within context rather than in isolation.

Overt Expressions in Emotion AI

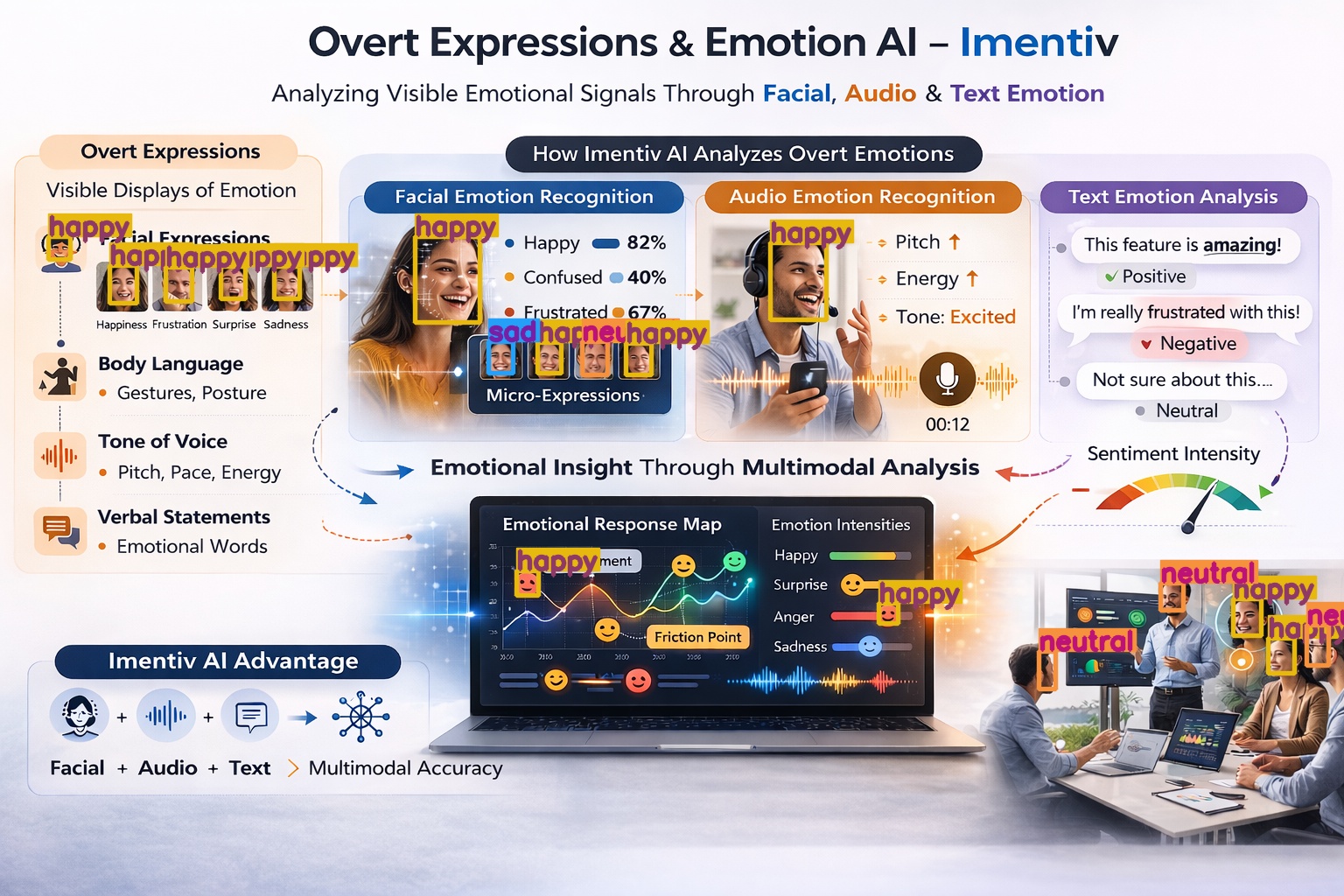

Because overt expressions are externally visible or audible, they lend themselves to measurement by Emotion AI systems. Multimodal analysis combines facial, vocal, and linguistic data, so that a signal that is ambiguous in one channel can be checked against the others.

Facial Emotion Recognition

Overt expressions frequently appear through visible facial muscle movements such as raised eyebrows, widened eyes, smiles, frowns, lip compression, or jaw tension. Emotion AI analyzes these patterns across video frames to identify emotional categories, intensity levels, and real-time shifts. In Imentiv AI, facial analysis captures both macro-expressions (clear emotional displays) and subtle micro-expressions, enabling researchers and organizations to understand authentic emotional reactions during interactions, content exposure, or behavioral assessments.
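To make the idea of tracking real-time shifts across video frames concrete, here is a minimal Python sketch. It assumes some upstream classifier has already assigned one emotion label per frame; the function name `dominant_label_shifts`, the `window` parameter, and the sample labels are all hypothetical and chosen for illustration. It is not a description of how Imentiv AI or any particular product works.

```python
from collections import Counter


def dominant_label_shifts(frame_labels, window=3):
    """Smooth per-frame labels with a sliding majority vote.

    Returns a list of (window_start_index, label) pairs, one for each
    point where the smoothed dominant label changes.
    Assumption: frame_labels come from a separate, hypothetical classifier.
    """
    shifts = []
    previous = None
    for start in range(len(frame_labels) - window + 1):
        votes = Counter(frame_labels[start:start + window])
        label, _count = votes.most_common(1)[0]
        if label != previous:
            shifts.append((start, label))
            previous = label
    return shifts


# Hypothetical per-frame labels; single-frame flickers are voted away.
frames = ["neutral", "neutral", "happy", "neutral", "happy",
          "happy", "happy", "surprise", "happy"]
print(dominant_label_shifts(frames))
```

The majority vote is one simple way to keep a single noisy frame from registering as an emotional shift; a larger window trades responsiveness for stability.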

Audio Emotion Recognition

Tone of voice, pitch variation, speech rhythm, vocal energy, and pause patterns provide additional overt emotional cues. For example, excitement may appear through increased pitch and faster speech, while anger may be reflected in vocal sharpness or increased intensity. Imentiv AI evaluates these vocal signals to detect emotional intensity, congruence between speech and tone, and shifts in engagement or emotional regulation.

Text Emotion Analysis

Overt emotional expression can also appear linguistically through direct emotional statements, strong sentiment words, exclamation emphasis, or emotionally charged language. Text-based analysis identifies explicit emotional markers such as “I’m thrilled,” “This is frustrating,” or “I’m really disappointed.” In Imentiv AI, text emotion analysis evaluates emotional polarity, intensity, and contextual cues to understand how overt emotional language aligns with facial and vocal data when available.
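As a rough illustration of spotting explicit emotional markers like the ones quoted above, the following Python sketch uses a tiny hand-written lexicon. The `MARKERS` and `INTENSIFIERS` tables and the `find_explicit_markers` function are hypothetical, built only from the three example phrases; production text emotion analysis typically relies on trained models rather than keyword lookup.

```python
import re

# Hypothetical toy lexicon: word -> (emotion, polarity).
MARKERS = {
    "thrilled": ("joy", "positive"),
    "frustrating": ("frustration", "negative"),
    "disappointed": ("disappointment", "negative"),
}
# Hypothetical intensifier list used as a crude intensity cue.
INTENSIFIERS = {"really", "very", "so", "extremely"}


def find_explicit_markers(text):
    """Return one dict per lexicon word found in the text."""
    normalized = text.lower().replace("\u2019", "'")  # curly to straight apostrophe
    tokens = re.findall(r"[a-z']+", normalized)
    hits = []
    for i, token in enumerate(tokens):
        if token in MARKERS:
            emotion, polarity = MARKERS[token]
            hits.append({
                "word": token,
                "emotion": emotion,
                "polarity": polarity,
                # True when the previous word is an intensifier.
                "intensified": i > 0 and tokens[i - 1] in INTENSIFIERS,
            })
    return hits


for sentence in ["I'm thrilled", "This is frustrating", "I'm really disappointed."]:
    print(sentence, "->", find_explicit_markers(sentence))
```

A keyword approach like this captures only the most overt statements and ignores negation, sarcasm, and context, which is why the contextual cues mentioned above matter.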

By integrating facial, audio, and text emotion signals, Imentiv AI creates a comprehensive emotional map that strengthens interpretation accuracy and reduces the risk of misclassification.
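One common way to integrate per-modality signals is late fusion, where each modality produces its own emotion scores and the scores are then averaged. The Python sketch below shows that general technique under stated assumptions: the `fuse_scores` function, the modality names, the weights, and the sample scores are hypothetical, and nothing here is claimed to be the fusion method Imentiv AI uses.

```python
def fuse_scores(modality_scores, weights=None):
    """Weighted average of emotion scores across modalities (late fusion).

    modality_scores: dict mapping modality name -> {emotion: score in [0, 1]}.
    weights: optional dict mapping modality name -> non-negative weight;
             defaults to equal weighting. Missing emotions count as 0.0.
    """
    if not modality_scores:
        raise ValueError("at least one modality is required")
    if weights is None:
        weights = {name: 1.0 for name in modality_scores}
    total_weight = sum(weights[name] for name in modality_scores)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive value")

    emotions = set()
    for scores in modality_scores.values():
        emotions.update(scores)

    return {
        emotion: sum(
            weights[name] * scores.get(emotion, 0.0)
            for name, scores in modality_scores.items()
        ) / total_weight
        for emotion in emotions
    }


# Hypothetical scores from three separate analyzers.
fused = fuse_scores({
    "face": {"joy": 0.8, "anger": 0.2},
    "voice": {"joy": 0.6, "anger": 0.4},
    "text": {"joy": 1.0},
})
print(max(fused, key=fused.get), fused)
```

Averaging means a label supported by only one channel is pulled down by the others, which is one simple way that combining modalities can temper a single-channel misreading.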

Ethics and Responsible Use

Although overt expressions are externally observable, emotional interpretation must remain context-sensitive and ethically grounded. Imentiv AI is designed to support emotional insight, not to replace human judgment. Informed consent, data privacy, and responsible deployment are essential components of ethical Emotion AI use.

By combining psychological science with multimodal AI analysis, Imentiv enhances the understanding of overt emotional expressions across research, organizational, and user experience environments.