
The Psychological Frameworks Powering Responsible Emotion AI

When you think about which LLM feels right for you (maybe it’s Gemini, Claude, or ChatGPT), it’s not always about accuracy or speed. You might prefer one because it understands your intent more intuitively, responds in a tone that feels natural, or simply makes you feel understood. These choices aren’t just technical; they’re psychological.

But text is only one layer of how humans communicate. We express ourselves through countless signals: a change in voice, a pause, a subtle shift in facial expression. To truly understand humans, technology must look beyond words to the many other cues through which people express themselves.

Why Emotion AI Needs Psychological Grounding

For any Emotion AI system to understand humans responsibly, it must be grounded in psychology. Today, there are many Emotion AI tools in the market. But when evaluating them, the real question to ask is: Which psychological frameworks are they built on?

Most systems that try to analyze emotions look for one thing — a facial change, a tone shift, or a word choice — and then label it. But human emotion is shaped by context, meaning, and personality. That’s why emotion analysis based on a single psychological theory, or a single data stream, often fails to hold up in the real world.

Imentiv AI therefore integrates several established psychological models into its foundation. These frameworks connect what the system detects to what psychology defines, creating a bridge between observation and understanding.

Let’s look at those frameworks one by one. Each contributes something distinct — one brings biological grounding, another explains meaning, another captures personality — and together they help our technology understand emotions with the same depth that psychology brings to human understanding.

1. Discrete Emotion Theory — The Expressive Foundation

Psychologists have long argued that some facial expressions are universal. Building on the work of Charles Darwin, researcher Paul Ekman demonstrated that emotions like happiness, sadness, anger, fear, surprise, disgust, and contempt are biologically rooted and recognized across cultures, with a neutral face as the baseline. We evolved these expressions to quickly signal danger, safety, reward, or social approval. Because people tend to identify them consistently, these emotions remain one of the most reliable and practical frameworks for analyzing reactions in video.

Imentiv AI uses these visible facial expressions to understand how people respond moment by moment. It measures what emotions people show on their faces — not what they secretly think or feel.

For example, happiness often signals engagement, while fear may reflect uncertainty or concern. At the same time, we know emotions can be mixed, masked, or influenced by context. That’s why Imentiv AI looks at emotional patterns over time instead of treating a single expression as the full story, keeping the analysis realistic, responsible, and grounded in psychology.
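The idea of reading patterns over time rather than single frames can be sketched in a few lines. The following is a minimal illustration, not Imentiv AI's actual pipeline: the function names, the window size, and the per-frame score format are all assumptions made for this example. It averages each emotion's score over a sliding window so that a one-frame spike does not dominate the reading.

```python
def smooth_scores(frames, window=3):
    """Average each emotion's score over a trailing window of frames.

    `frames` is a list of dicts mapping an emotion label to a score
    (a hypothetical format chosen for this sketch).
    """
    smoothed = []
    for i in range(len(frames)):
        chunk = frames[max(0, i - window + 1): i + 1]
        smoothed.append(
            {label: sum(f[label] for f in chunk) / len(chunk) for label in chunk[0]}
        )
    return smoothed


def dominant_over_time(frames, window=3):
    """Return the highest-scoring label per frame after smoothing."""
    return [max(s, key=s.get) for s in smooth_scores(frames, window)]


# Illustrative data: steady happiness with a single fearful-looking frame.
calm = {"happiness": 0.8, "fear": 0.2}
spike = {"happiness": 0.3, "fear": 0.7}
frames = [calm, calm, calm, spike, calm, calm, calm]

raw = [max(f, key=f.get) for f in frames]   # frame 3 reads as "fear"
smoothed = dominant_over_time(frames)       # every frame reads as "happiness"
```

A trailing average is only one of many possible choices; it trades responsiveness for stability, so a genuine but brief reaction would also be dampened.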

2. Facial Action Coding System — Anchoring Analysis in Biology

In the 1970s, Paul Ekman and Wallace V. Friesen developed the Facial Action Coding System (FACS) to objectively describe facial muscle movements. FACS was designed as a systematic way to document what the face does at a muscular level, using a shared anatomical language for research. The system breaks facial expressions down into Action Units (AUs), each linked to a specific muscle movement, such as a cheek raise (AU6), lip corner pull (AU12), or brow lower (AU4). Emotions emerge from combinations of these movements, along with their timing, intensity, and symmetry, not from a single muscle action alone.

Please note that no single AU equals an emotion.

Imentiv AI uses FACS because it keeps facial analysis grounded in measurable biology rather than assumptions. By focusing first on observable muscle activity, the system avoids oversimplifying expressions into one-to-one emotional labels. Instead, it analyzes patterns over time and interprets them carefully and probabilistically.
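To make the point about combinations concrete, here is a small sketch that scores a set of active AUs against a few commonly cited prototype combinations (for example AU6 + AU12 for a felt smile). It is an illustration only: the prototype table is simplified, the overlap score is not a calibrated probability, and none of the names reflect Imentiv AI's implementation. Timing, intensity, and symmetry are deliberately left out.

```python
# Simplified, commonly cited AU prototypes (an assumption for this sketch).
PROTOTYPES = {
    "happiness": {6, 12},
    "sadness": {1, 4, 15},
    "surprise": {1, 2, 5, 26},
    "anger": {4, 5, 7, 23},
}


def candidate_expressions(active_aus):
    """Rank prototypes by the share of their AUs that are currently active."""
    active = set(active_aus)
    scores = {
        label: len(aus & active) / len(aus) for label, aus in PROTOTYPES.items()
    }
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


# A cheek raise plus lip corner pull fully matches the happiness prototype.
smile = candidate_expressions({6, 12})

# A brow lower (AU4) alone partially matches both sadness and anger,
# which is why a single AU cannot be read as an emotion.
brow_only = dict(candidate_expressions({4}))
```

Returning a ranked list of partial matches, rather than one label, keeps the ambiguity visible to whatever step interprets the result.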

Just as importantly, Imentiv does not claim to detect lies, judge character, infer intent, or make psychological diagnoses from facial data. FACS supports understanding visible behavior — not defining who a person is.

3. Big Five Personality Model (OCEAN) — Understanding Perceived Psychological Tone

The Big Five Personality Model, most closely associated with the work of Robert McCrae and Paul Costa, emerged from decades of research showing that personality traits across languages consistently group into five broad dimensions: Openness, Conscientiousness, Extraversion, Agreeableness, and Neuroticism (often described as emotional sensitivity). These dimensions describe tendencies, not diagnoses.

For example, Openness reflects curiosity and imagination, Conscientiousness relates to discipline and control, Extraversion signals social energy, Agreeableness reflects warmth and cooperation, and Neuroticism captures emotional reactivity and stress sensitivity. The model is widely validated, cross-culturally stable, and designed to describe personality in a neutral, non-clinical way.

Imentiv AI uses this framework carefully and responsibly in its audio-visual, face-based personality analysis. It does not claim to detect someone’s fixed or lifelong personality traits. Instead, it analyzes observable facial expressions, vocal patterns, and emotional responses over time to understand perceived psychological tone — how a person’s behavior may come across in a given context.

The Big Five works well for this because it is non-diagnostic, avoids stigmatizing labels, and aligns naturally with emotional pattern analysis. Imentiv AI provides structured insights into behavioral tone by examining emotional stability, intensity, vocal modulation, and repeated affective responses — not judgments about a person’s identity.

4. Plutchik’s Wheel of Emotions — Mapping Emotional Complexity

Psychologist Robert Plutchik introduced the Wheel of Emotions to show that emotions are not isolated labels — they vary in intensity and often combine to form more complex states. His model maps eight primary emotions — joy, trust, fear, surprise, sadness, disgust, anger, and anticipation — arranged in opposing pairs. Just like colors blend, emotions can mix to create richer experiences, such as love (joy + trust) or awe (fear + surprise). The model also shows how emotions can intensify or soften, adding depth beyond simple categories.

At Imentiv AI, Plutchik’s model helps to detect emotional blending and escalation, capturing progressions that single-frame or categorical analysis might miss. It helps understand nuanced responses in natural settings by observing how emotions combine and shift over time — whether in video, audio, or text. This framework ensures that emotional analysis reflects real-world human complexity, providing a deeper, more context-aware view of emotions and interactions while keeping interpretation scientifically grounded.
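The blending Plutchik describes can be written down as a simple lookup. The sketch below encodes his eight primary dyads (adjacent emotions on the wheel) and the four opposing pairs; the function and variable names are invented for this example and say nothing about how Imentiv AI represents the model internally.

```python
# Plutchik's primary dyads: blends of adjacent primary emotions.
PRIMARY_DYADS = {
    frozenset({"joy", "trust"}): "love",
    frozenset({"trust", "fear"}): "submission",
    frozenset({"fear", "surprise"}): "awe",
    frozenset({"surprise", "sadness"}): "disapproval",
    frozenset({"sadness", "disgust"}): "remorse",
    frozenset({"disgust", "anger"}): "contempt",
    frozenset({"anger", "anticipation"}): "aggressiveness",
    frozenset({"anticipation", "joy"}): "optimism",
}

# Opposing pairs sit across the wheel from each other.
OPPOSITES = {
    "joy": "sadness",
    "trust": "disgust",
    "fear": "anger",
    "surprise": "anticipation",
}


def blend(first, second):
    """Return the primary dyad for two emotions, or None if they are not adjacent."""
    return PRIMARY_DYADS.get(frozenset({first, second}))


love = blend("joy", "trust")
awe = blend("surprise", "fear")      # order does not matter
no_blend = blend("joy", "sadness")   # opposites do not form a primary dyad
```

Using `frozenset` keys makes the lookup order-independent, which matches the idea that joy + trust and trust + joy describe the same blend.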

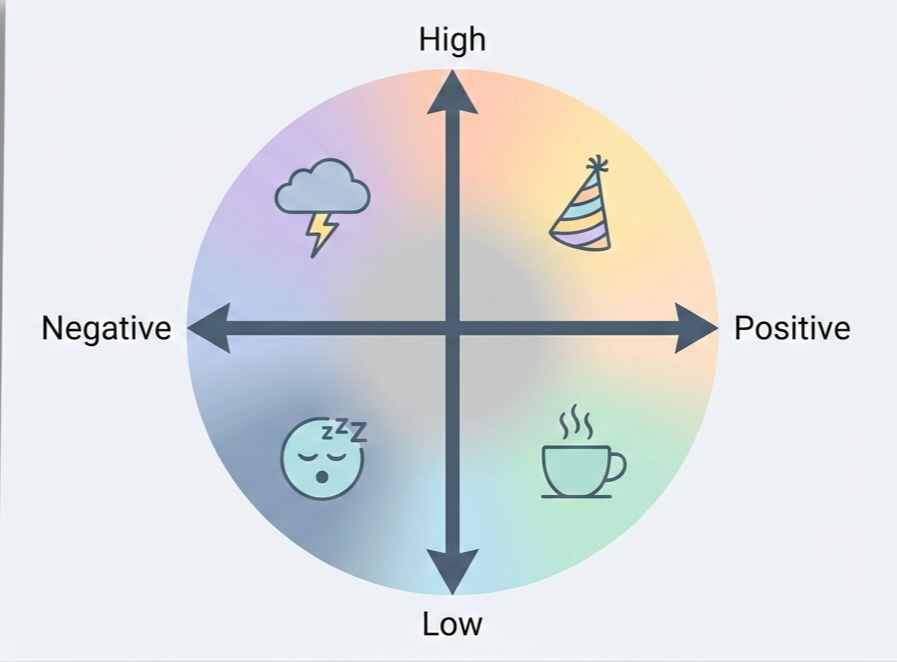

5. Circumplex Model of Affect — Understanding Emotion in Motion

In 1980, psychologist James A. Russell proposed the Circumplex Model of Affect after observing that people don’t experience emotions as isolated boxes like “happy” or “sad.” Instead, emotional experiences consistently organize along two continuous dimensions: valence (how pleasant or unpleasant something feels) and arousal (how activated or energized a person is). Rather than asking “Which emotion is this?”, the model asks: How positive or negative is it? And how intense is it? This reflects real human experience — emotions shift gradually, overlap, and vary in strength.

The model is visualized as a circular space where emotions sit based on their valence and arousal levels. High arousal with positive valence might reflect excitement or enthusiasm, while high arousal with negative valence could signal stress or anger. Low arousal with negative valence may indicate sadness or fatigue, and low arousal with positive valence often reflects calmness or contentment. Intensity increases as emotions move further from the neutral center.
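As a rough sketch of this geometry, the snippet below places a reading in one of the four quadrants and measures its intensity as the distance from the neutral center. The [-1, 1] scaling of both axes, the quadrant wording, and the function name are assumptions made for illustration; the model itself does not prescribe them.

```python
import math


def describe_affect(valence, arousal):
    """Map a (valence, arousal) point to a quadrant description and an intensity.

    Both inputs are assumed to lie in [-1, 1], with (0, 0) as the neutral center.
    """
    intensity = math.hypot(valence, arousal)  # distance from the neutral center
    if arousal >= 0:
        quadrant = "excitement / enthusiasm" if valence >= 0 else "stress / anger"
    else:
        quadrant = "calm / contentment" if valence >= 0 else "sadness / fatigue"
    return quadrant, intensity


excited = describe_affect(0.6, 0.8)     # pleasant and highly activated
tired = describe_affect(-0.3, -0.4)     # unpleasant and low energy
```

Because the output is a point rather than a label, two readings in the same quadrant can still be compared by how far each sits from the center.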

Imentiv AI uses this framework because it avoids rigid labeling, captures mixed or subtle states, and tracks emotional shifts over time across video, audio, and text. Most importantly, it aligns with how emotions actually unfold — fluid, graded, and context-dependent rather than fixed categories.

6. Cognitive Appraisal Theory — Understanding Emotion Through Meaning

Psychologists such as Richard Lazarus and Klaus Scherer showed that emotions don’t come directly from events — they come from how we interpret those events. According to Cognitive Appraisal Theory, we constantly evaluate what’s happening around us: Does this matter to me? Does it help or block my goals? Can I control it? Is it fair? What will happen next? These mental evaluations shape how we feel. That’s why the same situation can trigger excitement in one person and anxiety in another. Emotion, in this view, is a meaning-based response, not just a facial expression or physical reaction.

This framework is especially important for text analysis. Language captures thought, judgment, and reflection — emotions like confusion, pride, remorse, sarcasm, envy, or admiration often appear in words rather than on the face. Imentiv AI uses Cognitive Appraisal Theory to interpret these meaning-driven emotional signals instead of relying on simple positive/negative sentiment labels. Once identified, these text-based emotions are mapped into the valence–arousal framework to align with video and audio insights.

At the same time, Imentiv AI maintains clear boundaries: emotional language does not equal intent, belief, or diagnosis. Text emotions are contextual signals that help explain perspective — not definitive statements about a person’s psychological state.

Wrapping Up

Emotion is complex. It cannot be reduced to a single label, signal, or moment. It involves biology, cognition, expression, intensity, and context working together.

Imentiv AI is designed as an analytical support tool that integrates established psychological frameworks, from FACS and the Big Five to the Circumplex Model and Cognitive Appraisal Theory. It organizes observable signals across face, voice, and text into interpretable emotional patterns grounded in research.

This foundation ensures that outputs reflect measurable behavior and contextual meaning, not assumptions about intent, character, or diagnosis.

Download our Whitepaper for an in-depth view of Imentiv AI’s research, capabilities, and real-world use cases.

Discover more about Imentiv AI here.

Disclaimer:

Imentiv AI is a supportive analytical tool designed to provide insights into human emotions. It does not diagnose, predict behavior, define personality, or replace human judgment. Its outputs should be interpreted as contextual, probabilistic signals rather than definitive conclusions. Emotional and personality analyses serve as guides to understanding patterns and trends, not absolute truths, and should be used ethically, responsibly, and with respect to cultural and situational context.